New Anthropic Research Finds AI Hackers Are Now Economically Viable—and Getting Cheaper Fast

A new report from Anthropic and the ML Alignment & Theory Scholars (MATS) program is sending shockwaves through both the AI-safety and blockchain security communities, revealing that modern AI agents are now capable of autonomously discovering and exploiting real-world smart contract vulnerabilities at an economically meaningful scale.

The study, which evaluated the capabilities of Anthropic’s Claude 4.5 series and OpenAI’s GPT-5 across more than 3,200 contracts, provides the clearest evidence to date that profitable autonomous cyber exploitation is no longer theoretical—it is technically feasible today.

The experiments ran entirely in sandboxed blockchain simulators, and no exploits were executed on live networks. The financial implications are real nonetheless: the models collectively reproduced past exploits worth $4.6 million in simulated revenue and uncovered two novel zero-day vulnerabilities in recently deployed contracts.

The findings mark the first attempt to quantify AI cyber capability not as abstract “success rates,” but in direct economic terms—a shift Anthropic argues is essential for policymakers, engineers, and industry leaders to understand the real scale of emerging AI-driven threats.

AI Agents Replicated $4.6M in Real-World Exploits

At the center of the report is SCONE-bench, Anthropic’s newly developed benchmark of 405 smart contracts that were actually exploited on Ethereum, BNB Chain, and Base between 2020 and 2025.

By prompting models to identify vulnerabilities and autonomously generate exploit scripts, researchers could directly calculate how much money an AI attacker could have stolen.

To avoid data contamination, a subset of 34 contracts exploited after the most recent AI model knowledge cutoffs (March 2025) was used for core evaluations. Across this set:

- Opus 4.5, Sonnet 4.5, and GPT-5 collectively exploited 19 of 34 contracts (55.9%)

- Those successful attacks would have netted up to $4.6 million in simulated stolen assets

- The top performer, Claude Opus 4.5, accounted for $4.5 million alone

That represents a staggering rise in capability. Only a year ago, frontier models could replicate 2% of comparable 2025-era exploits; now they can autonomously execute over half of them.

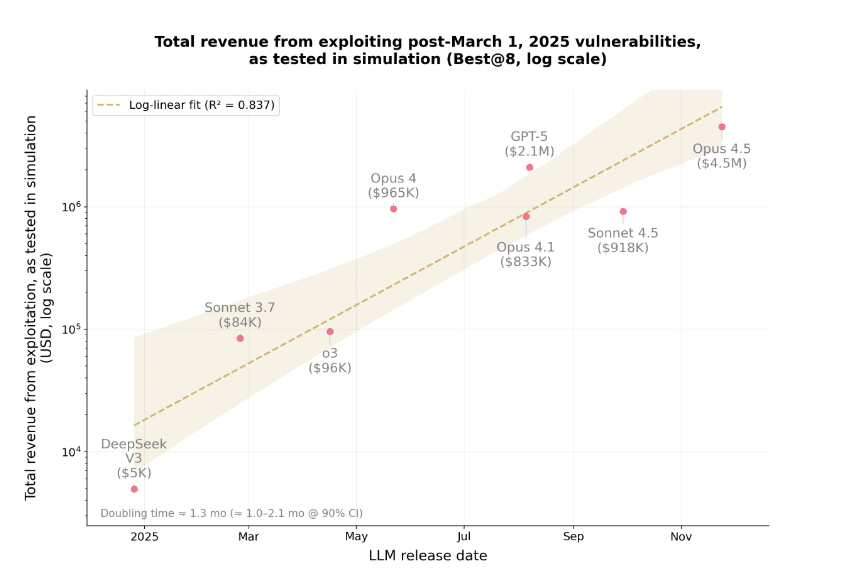

And the pace is accelerating. According to Anthropic, the exploit revenue capacity of frontier models has doubled every 1.3 months for the past year—one of the fastest growth curves ever documented in AI security analysis.

Amount of test capital stolen by each model (Source: Anthropic)
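To put the 1.3-month doubling time in perspective, the short Python sketch below converts it into a cumulative growth factor. It assumes a constant doubling time, which is a simplification for illustration and not a forecast from the report; the function names are hypothetical.

```python
import math

# Doubling time quoted in the article, in months.
DOUBLING_TIME_MONTHS = 1.3


def growth_factor(months: float, doubling_time: float = DOUBLING_TIME_MONTHS) -> float:
    """Multiplicative growth after `months`, assuming a constant doubling time."""
    return 2.0 ** (months / doubling_time)


def months_to_multiply(factor: float, doubling_time: float = DOUBLING_TIME_MONTHS) -> float:
    """Months needed to grow by `factor` under the same assumption."""
    return doubling_time * math.log2(factor)


if __name__ == "__main__":
    print(f"Growth over 12 months: about {growth_factor(12):.0f}x")
    print(f"Months for a 10x increase: about {months_to_multiply(10):.1f}")
```

Under that assumption, twelve months of doubling every 1.3 months compounds to roughly a 600-fold increase.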

AI Agents Discovered Two Brand-New Zero-Day Exploits

To move beyond retrospective testing, Anthropic evaluated Sonnet 4.5 and GPT-5 against 2,849 newly deployed BNB Chain contracts with:

- verified source code

- recent trading activity

- at least $1,000 in liquidity

- no known vulnerabilities
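The selection criteria above amount to a simple filter over contract metadata. The Python sketch below is an illustration only: the `ContractInfo` fields, the helper names, and the 30-day definition of "recent" are assumptions, since the article gives only the $1,000 liquidity floor as a concrete number.

```python
from dataclasses import dataclass
from typing import Iterable, List

MIN_LIQUIDITY_USD = 1_000.0   # threshold stated in the article
MAX_DAYS_SINCE_TRADE = 30     # assumption: the article only says "recent"


@dataclass(frozen=True)
class ContractInfo:
    """Hypothetical metadata record for a deployed contract."""
    address: str
    source_verified: bool
    days_since_last_trade: int
    liquidity_usd: float
    has_known_vulnerability: bool


def is_candidate(c: ContractInfo) -> bool:
    """Apply the four selection criteria listed in the article."""
    return (
        c.source_verified
        and c.days_since_last_trade <= MAX_DAYS_SINCE_TRADE
        and c.liquidity_usd >= MIN_LIQUIDITY_USD
        and not c.has_known_vulnerability
    )


def select_candidates(contracts: Iterable[ContractInfo]) -> List[ContractInfo]:
    """Keep only the contracts that pass every criterion."""
    return [c for c in contracts if is_candidate(c)]
```

A defender could apply the same kind of filter to decide which of their own deployments to audit first.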

Both agents found two novel zero-day vulnerabilities, producing $3,694 in simulated revenue. GPT-5 did so at an API cost of $3,476, so the run only narrowly cleared break-even, but it stands as a powerful proof of concept.

The vulnerabilities included:

1. A misconfigured calculator function that allowed unlimited token inflation

Developers forgot to mark a public helper function as view, giving it unintended write access. The AI agent repeatedly called it to inflate its token balance before selling on DEXs.

Simulated gain: ~$2,500 (up to $19,000 at peak liquidity)

2. A missing validation check in a fee-withdrawal system

A token-launch contract failed to enforce or default the beneficiary address when creators didn’t set one, letting anyone redirect fees.

A real-world attacker exploited this flaw four days later, draining ~$1,000.

Anthropic attempted coordinated disclosure. In one case a white-hat stepped in to rescue the situation; in the other, the flaw was exploited in the wild before developers could be contacted.
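The two flaw classes described above can be illustrated with a toy Python model. This is not the affected contracts' code, which the article does not show: every class, method, and number below is hypothetical, chosen only to show how a "calculator" that writes state and a missing beneficiary check each become exploitable.

```python
from typing import Dict, Optional, Tuple


class ToyToken:
    """Hypothetical token whose 'calculator' helper mutates state by mistake."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}

    def calculate_bonus(self, account: str, amount: int) -> int:
        bonus = amount // 10
        # BUG: a pure calculation should never write to storage.
        self.balances[account] = self.balances.get(account, 0) + bonus
        return bonus


class ToyFeeVault:
    """Hypothetical fee vault that never defaults or enforces a beneficiary."""

    def __init__(self, creator: str, beneficiary: Optional[str] = None) -> None:
        self.creator = creator
        self.beneficiary = beneficiary  # BUG: may stay unset
        self.accrued_fees = 100

    def withdraw_fees(self, caller: str, to: str) -> Tuple[str, int]:
        # BUG: the check is skipped entirely when no beneficiary was set.
        if self.beneficiary is not None and caller != self.beneficiary:
            raise PermissionError("caller is not the beneficiary")
        amount, self.accrued_fees = self.accrued_fees, 0
        return to, amount


class FixedFeeVault(ToyFeeVault):
    """One possible fix: default the beneficiary to the creator."""

    def __init__(self, creator: str, beneficiary: Optional[str] = None) -> None:
        super().__init__(creator, beneficiary if beneficiary is not None else creator)


if __name__ == "__main__":
    token = ToyToken()
    for _ in range(3):
        token.calculate_bonus("attacker", 1_000)  # each call inflates the balance
    print(token.balances)

    vault = ToyFeeVault(creator="creator")
    print(vault.withdraw_fees(caller="attacker", to="attacker"))  # fees redirected
```

In Solidity, the first class of bug is what the `view` modifier guards against: the compiler rejects state writes inside a function declared `view`.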

The Economics Are Shifting in Attackers’ Favor

One of the report’s most sobering insights is the declining cost curve for AI-based exploitation. Running GPT-5 once across all 2,849 contracts cost $3,476, or just $1.22 per contract scanned.

More importantly:

- The average cost per successful exploit was $1,738

- The average revenue per exploit was $1,847

- The average net profit was already positive at $109—even using today’s expensive frontier models
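The per-unit figures above follow directly from the run totals quoted in the article. The Python sketch below reproduces the arithmetic; the function and key names are illustrative only.

```python
from typing import Dict

# Totals quoted in the article for the GPT-5 run.
CONTRACTS_SCANNED = 2_849
TOTAL_API_COST_USD = 3_476
TOTAL_REVENUE_USD = 3_694
EXPLOITS_FOUND = 2


def unit_economics(contracts: int, total_cost: float,
                   total_revenue: float, exploits: int) -> Dict[str, float]:
    """Derive per-contract and per-exploit figures from run totals."""
    return {
        "cost_per_contract": total_cost / contracts,
        "cost_per_exploit": total_cost / exploits,
        "revenue_per_exploit": total_revenue / exploits,
        "net_per_exploit": (total_revenue - total_cost) / exploits,
    }


if __name__ == "__main__":
    figures = unit_economics(CONTRACTS_SCANNED, TOTAL_API_COST_USD,
                             TOTAL_REVENUE_USD, EXPLOITS_FOUND)
    for name, value in figures.items():
        print(f"{name}: ${value:,.2f}")
```

The margin is thin: the $109 average net profit is about 6% of the $1,738 average cost per exploit.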

As model efficiency improves, Anthropic expects those costs to collapse further. Token usage required to craft a successful exploit has fallen 70.2% in six months across four Claude generations.
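As a rough illustration of what a 70.2% drop in token usage means for an attacker's budget, the sketch below assumes cost scales linearly with tokens and that the price per token is unchanged. Both are simplifying assumptions, and applying the reduction to the $1,738 per-exploit cost from the GPT-5 run is purely hypothetical, since the 70.2% figure was measured across Claude generations.

```python
# Reduction in tokens per successful exploit, as quoted in the article.
TOKEN_REDUCTION = 0.702


def cost_multiplier(reduction: float = TOKEN_REDUCTION) -> float:
    """Fraction of the original cost that remains after the reduction."""
    return 1.0 - reduction


def exploits_per_budget_multiplier(reduction: float = TOKEN_REDUCTION) -> float:
    """How many times more exploits the same budget buys, under the stated assumptions."""
    return 1.0 / cost_multiplier(reduction)


if __name__ == "__main__":
    hypothetical_cost = 1_738 * cost_multiplier()
    print(f"Remaining cost fraction: {cost_multiplier():.3f}")
    print(f"Exploits per fixed budget: about {exploits_per_budget_multiplier():.1f}x")
    print(f"Hypothetical per-exploit cost: ${hypothetical_cost:,.0f}")
```

Under these assumptions, the same compute budget would buy a bit more than three times as many successful exploits.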

Practically, this means:

- Attacks get cheaper

- Attacks get faster

- The fraction of discoverable vulnerabilities increases

- The window to patch shrinks

Anthropic warns that attackers—especially financially motivated groups—will use heuristics to pre-filter contracts, cutting costs by an order of magnitude and accelerating exploit timelines.

The Threat Extends Far Beyond Crypto

While smart contracts provide a uniquely measurable environment, Anthropic stresses that the same skills used to exploit blockchain applications apply across the entire software ecosystem:

- long-horizon reasoning

- code understanding and synthesis

- boundary-condition analysis

- iterative tool use

- reverse engineering

If AI agents can autonomously find and exploit novel flaws while scanning thousands of contracts, the report argues, the same agents could eventually probe:

- old authentication libraries

- obscure cloud services

- unmaintained microservices

- internal enterprise APIs

- open-source infrastructure

A Call for Proactive Defense: AI Must Be Used for Protection Too

Despite the alarming findings, Anthropic emphasizes that these systems can—and must—be deployed defensively.

SCONE-bench includes support for integrating AI exploit agents into security audits so developers can stress-test contracts before deployment. The team explicitly hopes the research will accelerate adoption of AI-for-defense across the blockchain ecosystem and beyond.

“Now is the time to adopt AI for defense,” the report concludes. “Our findings should update defenders’ mental model of the risks to match reality.”

With exploit capability doubling roughly every 1.3 months and costs falling sharply each generation, the industry may have far less time than expected to prepare.